CHAPTER 1

HOW SEARCH ENGINES WORK

Search engines play a crucial role in successful internet marketing. They have two major functions: the first is crawling the web and building an index; the second is providing searchers with a ranked list of the websites they judge most relevant to each search.

Crawling and Indexing

Picture the World Wide Web as a network of connected stops in a large city subway system.

It is the job of the search engines to “crawl” every stop in the city. Each stop is a unique document, usually a web page but sometimes a PDF, an image, or another file type. To reach every stop in the city, search engines use the best route available: links.

- Crawling and indexing: search engines crawl and index the numerous documents, files, news, pages, media, and videos on the World Wide Web.

- Supplying answers: search engines answer user queries by listing the relevant pages they have gathered, ranked on the basis of their relevance.

The link structure of the web functions to connect all of the web pages.

Links allow the search engines’ automated robots, called “crawlers” or “spiders,” to reach the billions of interconnected documents on the web.
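As a rough illustration of how a crawler can reach every connected stop by following links, here is a minimal sketch in Python. The `LINKS` graph and its page names are hypothetical, and a breadth-first traversal is only one plausible strategy; real crawlers fetch pages over the network and are far more elaborate.

```python
from collections import deque

# Hypothetical link graph: each "stop" (page) maps to the pages it links to.
LINKS = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["post-1", "post-2"],
    "post-1": ["blog"],
    "post-2": ["post-1", "report.pdf"],
    "report.pdf": [],
    "orphan": ["home"],  # no page links here, so the crawler never finds it
}

def crawl(start, links):
    """Follow links breadth-first from `start` and return the visit order."""
    seen = {start}
    queue = deque([start])
    order = []
    while queue:
        page = queue.popleft()
        order.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return order

visited = crawl("home", LINKS)
print(visited)
```

Note that the `orphan` page is never visited: a document that no other document links to is invisible to a crawler that travels only by links.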

Once the search engines find these pages, they decode them and store selected parts in massive databases, from which they can be recalled whenever they are needed for a search query. To handle the difficult task of storing so many pages and retrieving them in a few seconds, the companies that operate search engines have built data centers across the world.

These huge storage facilities house a great number of machines that process information quickly. When you search for anything, you want results fast, and even a short delay disrupts your experience. That’s why search engines are built to process queries and return results within seconds.
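One common way to make stored pages quick to recall is an inverted index, which maps each word to the documents containing it. The sketch below assumes that technique for illustration only; the source does not say how any particular engine stores its data, and `DOCS`, `build_index`, and `lookup` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical stored documents, keyed by document id.
DOCS = {
    1: "search engines crawl the web",
    2: "engines index pages for fast search",
    3: "links connect pages on the web",
}

def build_index(docs):
    """Map every word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def lookup(index, query):
    """Return the ids of documents that contain every word in the query."""
    words = query.lower().split()
    if not words:
        return set()
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result

index = build_index(DOCS)
print(sorted(lookup(index, "search engines")))
print(sorted(lookup(index, "web pages")))
```

Because the index is built ahead of time, answering a query only requires a few set lookups instead of rereading every stored document.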

Supplying Answers

Search engines are answer machines. When you search for anything online, the search engine quickly scours its stored documents and other files and does two things: it returns only the results it considers relevant to your query, and it ranks those results according to the popularity of the websites providing the information. SEO is therefore meant to influence both relevance and popularity. These two factors underpin efficient, conversion-driven, successful internet marketing.

How are popularity and relevance judged by search engines?

Good internet marketing is often the result of a good search engine ranking. To a search engine, relevance means more than finding a page with the right words. In the early days of the web, search engines did little more than check for the right words on a page, and the results were of limited value. Today, engineers have devised better ways to match results to queries. Many factors now influence relevance, and this guide explains the most important ones.

Search engines operate on the assumption that the more popular a site, document, or page is, the more valuable its information must be. Judging by how satisfied users are with search results, the assumption is a fair one.

Relevance and popularity are not judged manually. Instead, search engines use algorithms (mathematical equations) to separate the wheat from the chaff (relevance) and then to rank the wheat by quality (popularity).

These algorithms often comprise hundreds of variables, which are called “ranking factors.”
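The two-step idea, filter by relevance and then order by popularity, can be sketched in a few lines of Python. This is a deliberately simplified illustration, not how any real engine works: the `PAGES` data is made up, word overlap stands in for relevance, and a hypothetical `inbound_links` count stands in for popularity, whereas real algorithms weigh hundreds of ranking factors.

```python
# Made-up pages; "inbound_links" is a hypothetical stand-in for popularity.
PAGES = [
    {"url": "a.example", "text": "universities and colleges guide", "inbound_links": 120},
    {"url": "b.example", "text": "list of universities", "inbound_links": 450},
    {"url": "c.example", "text": "subway maps", "inbound_links": 900},
]

def rank(query, pages):
    """Keep pages sharing a word with the query, then sort by popularity."""
    terms = set(query.lower().split())
    # Step 1 (relevance): discard pages with no query word at all.
    relevant = [p for p in pages if terms & set(p["text"].lower().split())]
    # Step 2 (popularity): order the remaining pages, most popular first.
    return sorted(relevant, key=lambda p: p["inbound_links"], reverse=True)

results = [p["url"] for p in rank("universities", PAGES)]
print(results)
```

Here `c.example` has the most inbound links but never appears in the results, because it fails the relevance step before popularity is considered.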

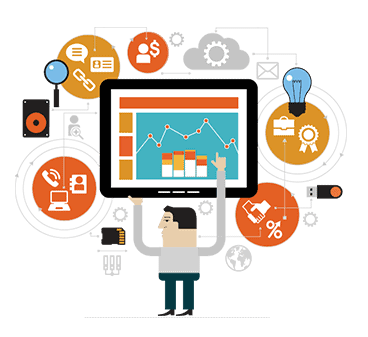

In this example, you can deduce that, according to the search engine, Ohio State’s page is the most popular and relevant result for the search “universities,” while Harvard’s is less popular or relevant.

How do I improve my Internet Marketing?

How Internet Marketing Becomes Successful

The complex algorithms of search engines may seem impenetrable. The engines themselves offer only a few clues about how to achieve better results or attract more traffic. The little information they have given us on optimization and best practices is explained below:

SEO INFORMATION FROM GOOGLE WEBMASTER GUIDELINES

To get an improved ranking in their search engine, Google recommends the following:

- Make pages primarily for users, not for search engines. Never deceive your users or show search engines different content from what you show users, a practice called “cloaking.”

- Make a site with a clear hierarchy and text links. Every page should be reachable from at least one static text link.

- Create a useful, information-rich site, and write pages that clearly and accurately describe your content. Ensure that your <title> elements and ALT attributes are descriptive and accurate.

- Use keywords to create descriptive, user-friendly URLs. Provide one version of a URL to reach a document, and use 301 redirects or the rel="canonical" attribute to address duplicate content.
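To make the last recommendation concrete, the sketch below collapses several variants of a URL into a single canonical form and builds the matching rel="canonical" tag. The normalization rules (force HTTPS, drop "www.", drop trailing slashes, drop the query string and fragment) are assumptions chosen for a hypothetical site; the right rules depend on how your own site serves its pages.

```python
from urllib.parse import urlsplit, urlunsplit

def canonical_url(url):
    """Collapse duplicate variants of a URL into one form (assumed rules)."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    # Treat "/shoes/" and "/shoes" as the same document.
    path = parts.path.rstrip("/") or "/"
    # Assumption: query strings and fragments never change the content here.
    return urlunsplit(("https", host, path, "", ""))

def canonical_tag(url):
    """Build the <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{canonical_url(url)}">'

print(canonical_url("http://WWW.Example.com/shoes/?ref=home"))
print(canonical_tag("https://example.com/shoes"))
```

Every variant that maps to the same canonical URL can then either carry this tag or answer with a 301 redirect to the canonical address.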

SEO INFORMATION FROM BING WEBMASTER GUIDELINES

To improve the SEO ranking of your internet marketing, Bing engineers at Microsoft recommend the following:

- Put a clean, keyword-rich URL structure in place.

- Ensure your content is not buried inside rich media such as Ajax, Adobe Flash Player, or JavaScript, and verify that rich media does not hide links from crawlers.

- Create keyword-rich content, and make sure the keywords match what users are searching for. Update your content regularly.

- Don’t place text you want indexed inside images. For example, if you need your company name to be indexed, don’t put it only inside the company logo.

Fear Not, Partner in Search Marketing!

In addition to this freely given advice, over the 15+ years that web search has existed, search marketers have found ways to learn how search engines rank pages. SEO experts and marketers use that knowledge to improve the rankings of their own sites and their clients’ sites.

Perhaps surprisingly, the search engines support many of these efforts, although the public visibility of that support is often low. Search marketing conferences such as Search Engine Strategies, Search Marketing Expo, Distilled, and Pubcon attract representatives and engineers from all the major engines. Search engine representatives also help webmasters by taking part in online forums, groups, and blogs.

The Experiment

- Get a new website registered with meaningless keywords (e.g., falopuyhul.com).

- Create several pages on that website, all targeting a similarly nonsensical term (e.g., hiiilopile).

- Make all the pages as identical as possible, then alter one variable at a time, experimenting with keyword use, text placement, link structure, formatting, etc.

- Point links at the domain from well-crawled, indexed pages on other domains.

- Make a record of how the pages rank in search engines.

- Now, make little changes to the pages and check how the changes affect the search results so you can be sure of the factors that can move a page up or down.

- Record any results that appear to be effective and test them again with other terms or on other domains. If several tests produce consistent results, you can assume you have found a pattern used by search engines.
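The record-keeping and retesting steps above can be sketched as a small consistency check. The `TRIALS` data below is made-up placeholder data, not real search results, and the names `variant_a`, `variant_b`, and `control` are hypothetical labels for test pages.

```python
# Placeholder observations (made up): trial label -> pages in observed rank order.
TRIALS = {
    "term-1/domain-1": ["variant_a", "variant_b", "control"],
    "term-2/domain-1": ["variant_a", "control", "variant_b"],
    "term-1/domain-2": ["variant_a", "variant_b", "control"],
}

def consistently_outranks(trials, page, other):
    """True only if `page` ranks above `other` in every recorded trial."""
    return all(
        order.index(page) < order.index(other)
        for order in trials.values()
    )

print(consistently_outranks(TRIALS, "variant_a", "control"))
print(consistently_outranks(TRIALS, "variant_b", "control"))
```

Only a change that wins in every trial, across different terms and domains, is treated as a likely pattern; a change that wins in some trials and loses in others is not.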

An Example We Tried

We started our test with a hypothesis: a link placed higher up on a page carries more weight than a link lower down on the page. We tested this by creating a nonsense domain whose homepage linked to three other pages, each containing a nonsense word exactly once. After the search engines crawled the pages, we found that the page linked earliest on the homepage ranked first.

This kind of testing is very informative, but it is not all that a search marketer needs to know.

Beyond this kind of testing, search marketers can also glean competitive intelligence about how search engines work from the patent applications that the major engines file with the United States Patent Office. Perhaps the best known of these is the system that fueled Google’s rise from the Stanford dormitories in the late 1990s: PageRank, documented as Patent #6285999. The original paper on the subject, “The Anatomy of a Large-Scale Hypertextual Web Search Engine,” is also well worth studying. But don’t worry, you don’t have to take remedial calculus just to understand SEO.
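For the curious, the core idea behind PageRank can be sketched in a few lines: a page is important if important pages link to it. The code below is a simplified power-iteration version written for illustration, not the patented system or Google’s production algorithm. The `GRAPH` data is hypothetical, every link target is assumed to appear as a key in the graph, and the damping value of 0.85 is the one commonly quoted from the original paper.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration over a dict of outgoing links."""
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives a small baseline share.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, targets in links.items():
            if targets:
                # A page splits its damped rank evenly among its links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no links spreads its rank over all pages.
                for p in pages:
                    new_rank[p] += damping * rank[page] / n
        rank = new_rank
    return rank

# Hypothetical four-page web: "c" is linked from three pages, "d" from none.
GRAPH = {"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}
ranks = pagerank(GRAPH)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this toy graph the most linked-to page, `c`, ends up with the highest score, and `d`, which nothing links to, ends up with the lowest.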